I’m writing this on an airplane while headed to the heart of Wi-Fi, Silicon Valley. These are some random thoughts, so please bear with the lack of formatting and general disregard for the English language. I’m not a Lee Badman or Marcus Burton or Ben Miller who can just pound out an award-winning essay in short order.

Multi-User MIMO (MU-MIMO) is fancy new technology that enables an access point to transmit to multiple client devices simultaneously. Pretty cool stuff. But why? Many mobile devices support only 1 spatial stream (SS), and at best 2 SS. So if you have a badass 4×4:4 AP but all of your client devices are 1×1:1, you paid WAY too much for an AP: talking to one client at a time, it can only ever use one of its four streams. MU-MIMO fixes that. With that introduction to MU-MIMO, here are some thoughts.

#1

There is no Wave 1 or Wave 2. There is no standard for either. The terms were invented to delineate between the first and second generations of 11ac. It is generally accepted that Wave 2 includes support for MU-MIMO. We (marketing people) have to put a label on it, and when MU-MIMO APs hit the street we’ll call them Wave 2. Just remember that it’s a made-up term with no real standard.

#2

Why is there a third number representing the capabilities of a MIMO AP? 2×2:2. 3×3:3. Well, it’s because the third number hasn’t always been the same as the first two. First-gen 11n was 3×3:2. Cisco has a 4×4:3. When it comes to flat-out speed, the last number (spatial streams) is the most important. So the max data rate of a 4×4:3 and a 3×3:3 is exactly the same.
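To put numbers on that, here is a minimal Python sketch of the peak 802.11ac PHY rate for one configuration assumed purely for illustration (80 MHz channel, MCS 9, short guard interval). The rate is data subcarriers × bits per subcarrier × coding rate × spatial streams, divided by the OFDM symbol time. Notice that the Tx and Rx chain counts never appear in the formula; only the stream count does.

```python
# 802.11ac (VHT) parameters for one illustrative case: 80 MHz, MCS 9, short GI.
DATA_SUBCARRIERS_80MHZ = 234      # 242 tones minus 8 pilots
BITS_PER_SUBCARRIER_MCS9 = 8      # 256-QAM
CODING_RATE_MCS9 = 5 / 6
SYMBOL_US_SGI = 3.6               # 3.2 us symbol + 0.4 us short guard interval


def max_phy_rate_mbps(spatial_streams: int) -> float:
    """Peak PHY rate for the case above; chain counts play no part."""
    bits_per_symbol = (DATA_SUBCARRIERS_80MHZ * BITS_PER_SUBCARRIER_MCS9
                       * CODING_RATE_MCS9 * spatial_streams)
    return bits_per_symbol / SYMBOL_US_SGI   # bits per microsecond == Mbps


# A 4x4:3 and a 3x3:3 AP both carry 3 spatial streams.
print(round(max_phy_rate_mbps(3), 1))  # 1300.0
```

Under these assumptions both APs top out at about 1300 Mbps. The extra chain on the 4×4:3 buys diversity or beamforming gain, not peak rate.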

There is another variable with 11ac: how many multi-user streams an AP supports, and it won’t always equal the number of spatial streams. Why is that? More on that later (#10).

Because of that, I have proposed a new nomenclature to indicate the number of MU streams: adding a fourth number. For example, 4×4:4:3.

– 4 Transmit Chains

– 4 Receive Chains

– 4 Single-user Spatial Streams

– 3 Multi-user Spatial Streams
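If you wanted tooling to understand the proposed four-field notation, a parser might look like this minimal Python sketch. The `RadioCaps` and `parse_caps` names are hypothetical, the fourth field is optional so today’s three-field strings still parse, and the single sanity check (MU streams can’t exceed Tx chains, since every simultaneous stream needs a transmit chain) is an assumption about what counts as valid.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class RadioCaps:
    tx_chains: int
    rx_chains: int
    su_streams: int
    mu_streams: Optional[int]  # None when the 4th field is absent


# Accepts "3x3:2", "3×3:2", or the proposed "4×4:4:3".
_PATTERN = re.compile(r"\s*(\d+)\s*[xX×]\s*(\d+)\s*:\s*(\d+)(?:\s*:\s*(\d+))?\s*")


def parse_caps(text: str) -> RadioCaps:
    m = _PATTERN.fullmatch(text)
    if not m:
        raise ValueError(f"unrecognized MIMO notation: {text!r}")
    tx, rx, ss = (int(g) for g in m.group(1, 2, 3))
    mu = int(m.group(4)) if m.group(4) else None
    # Assumed check: each simultaneous MU stream needs its own Tx chain.
    if mu is not None and mu > tx:
        raise ValueError("MU streams cannot exceed Tx chains")
    return RadioCaps(tx, rx, ss, mu)


print(parse_caps("4×4:4:3"))
# RadioCaps(tx_chains=4, rx_chains=4, su_streams=4, mu_streams=3)
```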

Now, I don’t have any skin in the game whether this is adopted or not. But it’s an easy way to show capabilities.

#3

MU-MIMO requires client support. Look for MU-capable clients to start hitting the street by Q3 2015.

#4

With regard to radio technology, single-user MIMO and multi-user MIMO are basically the same thing. Both require the AP to do some badass math to decorrelate the signals so they look different to each receiving antenna. Whether the receiving antennas are on one device (SU-MIMO) or on multiple devices (MU-MIMO) doesn’t really matter. The really smart people who think of this kind of thing knew this could be done a long time ago.
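One textbook way to do that math is zero-forcing precoding: if the AP knows the channel matrix H from each of its antennas to each client (learned from client feedback), it transmits through the inverse of H so each client receives only its own stream. This minimal Python sketch assumes a 2-antenna AP, two single-antenna clients, and made-up channel values. It skips the power normalization a real precoder needs and is not a claim about what any vendor actually implements.

```python
# H[k][a] is the (made-up) complex channel from AP antenna a to client k.
H = [[1.0 + 0.5j, 0.3 - 0.2j],
     [0.2 + 0.1j, 0.9 - 0.4j]]


def inverse_2x2(m):
    (a, b), (c, d) = m
    det = a * d - b * c
    if abs(det) < 1e-12:
        # Nearly identical channels: the AP cannot make the signals look different.
        raise ValueError("channels are too correlated to separate")
    return [[d / det, -b / det], [-c / det, a / det]]


def matmul(x, y):
    return [[sum(x[i][k] * y[k][j] for k in range(len(y))) for j in range(len(y[0]))]
            for i in range(len(x))]


W = inverse_2x2(H)          # precoder: column k carries client k's stream
effective = matmul(H, W)    # what the clients see: ~identity, so no cross-talk

print([[round(abs(v), 6) for v in row] for row in effective])
# [[1.0, 0.0], [0.0, 1.0]]
```

As the two channel rows become more alike, the determinant shrinks toward zero and the required precoder blows up, which is the "can’t decorrelate these clients" case.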

So why is MU-MIMO just now happening? First, it requires feedback from the clients. It was difficult enough to get clients to support explicit TxBF feedback. Second, the MAC had to be figured out.

#5

The MAC is whack. One of the greatest challenges with MU-MIMO is how the MAC is going to be handled. Every unicast frame requires an ACK, and a Wi-Fi device can either transmit or receive but never both at the same time. So say the AP sends three unicast frames at the same time. The ACKs CANNOT all come back to the AP at the same time or you have a collision, because MU-MIMO doesn’t work in the upstream. So you have to delay some ACKs, etc. Frames are varying sizes. Frames are sent at varying data rates. This is getting complicated.
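To see why this gets complicated, here is a minimal Python sketch of the airtime for one MU exchange. It assumes one common 802.11ac sequencing: every client’s frame is padded so they all end together, the first client answers with a Block Ack right after SIFS, and each remaining client is polled with a Block Ack Request (BAR) and answers in turn. SIFS is the 16 µs 5 GHz value; the BAR and Block Ack durations are placeholder assumptions, and preambles are ignored.

```python
SIFS_US = 16   # 5 GHz OFDM SIFS
BAR_US = 30    # placeholder airtime of a Block Ack Request
BA_US = 32     # placeholder airtime of a Block Ack response


def mu_exchange_airtime(frames):
    """frames: list of (payload_bytes, phy_rate_mbps), one per MU client.

    Returns (data_us, padding_us per client, total_us), ignoring preambles.
    """
    # bits / Mbps == microseconds
    durations = [size_bytes * 8 / rate for size_bytes, rate in frames]
    data_us = max(durations)                   # all frames end together
    padding = [data_us - d for d in durations]
    # First client acks immediately; every other client needs BAR -> BA.
    acks_us = SIFS_US + BA_US
    acks_us += (len(frames) - 1) * (SIFS_US + BAR_US + SIFS_US + BA_US)
    return data_us, padding, data_us + acks_us


print(mu_exchange_airtime([(3000, 400), (1500, 300), (1000, 100)]))
# (80.0, [20.0, 40.0, 0.0], 316.0)
```

With these placeholder numbers, padding wastes 20 µs and 40 µs on the two faster clients, and the acknowledgment sequence (236 µs) takes longer than the 80 µs of data itself.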

#6

Each MU stream can be a different MCS (data rate), but all streams must share the same Tx power. As MCS goes up, the maximum allowable Tx power goes down. (Why is beyond the scope of what I have time to type right now. I have a PPT I’m supposed to be working on. Google PAPR.) So if one client can Rx at MCS 9 but another can only Rx at MCS 7, the Tx power has to be the lower of the two (the MCS 9 power). BUT that power is lower than what MCS 7 would normally get. Is it still enough power for the other client at MCS 7? Or should that client’s rate drop down to a lower MCS? I know that sounded really confusing. Here’s the summary: proper rate selection will be a HUGE differentiator among vendors.
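Here is a minimal Python sketch of that dilemma, assuming one shared Tx power for the whole MU transmission. Every number in the power table, SNR table, and noise floor is made up for illustration, and the sketch ignores the power split across streams and any leftover inter-client interference. The shared power is capped by the highest MCS in the group, and any client whose SNR falls short at that power is stepped down.

```python
# Made-up values: real per-MCS power limits and SNR thresholds vary by radio.
MAX_TX_POWER_DBM = {0: 23, 1: 23, 2: 23, 3: 22, 4: 22, 5: 21, 6: 20, 7: 20, 8: 18, 9: 17}
MIN_SNR_DB = {0: 5, 1: 8, 2: 11, 3: 14, 4: 18, 5: 21, 6: 23, 7: 25, 8: 29, 9: 31}
NOISE_FLOOR_DBM = -95


def mu_rates(path_loss_db, wanted_mcs):
    """Return (shared Tx power, MCS per client) for one MU transmission.

    path_loss_db: assumed-known path loss from the AP to each client.
    wanted_mcs:   the MCS each client could handle on its own.
    """
    # One power for every stream, limited by the highest MCS in the group.
    power = min(MAX_TX_POWER_DBM[m] for m in wanted_mcs)
    chosen = []
    for loss, mcs in zip(path_loss_db, wanted_mcs):
        snr = power - loss - NOISE_FLOOR_DBM
        # Step down until the reduced power still supports this MCS.
        while mcs > 0 and snr < MIN_SNR_DB[mcs]:
            mcs -= 1
        chosen.append(mcs)
    # Single pass: power is not re-raised if the high-MCS client steps down.
    return power, chosen


print(mu_rates([80, 90], [9, 7]))  # (17, [9, 5])
```

With these made-up numbers, the MCS 7 client that was fine on its own at 20 dBm drops to MCS 5 once the MCS 9 client pins the power at 17 dBm. A smarter scheduler might lower the MCS 9 client instead, or put the two in different groups; that judgment call is where vendors will differ.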

#7

Keys to MU-MIMO performance: rate and group selection. Before a Wi-Fi device transmits, it must determine the fastest rate at which it can successfully transmit to the receiver. Too slow and it’s wasting airtime. Too fast and the STA won’t receive the frame properly, so it will have to be retried. Mix this with the power problem in thought #6 and an AP has its hands full figuring out the proper data rate.
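A minimal Python sketch of the speed-versus-retries tradeoff: pick the MCS with the highest expected goodput, meaning PHY rate times the fraction of frames that actually get through. The rate table is the 802.11ac single-stream, 80 MHz, short-GI table; the delivery ratios are hypothetical. This is the basic idea behind throughput-based rate control such as Linux’s Minstrel, heavily simplified (no retry airtime, no probing).

```python
# 802.11ac PHY rates (Mbps): 1 spatial stream, 80 MHz, short GI, MCS 0-9.
VHT80_1SS_SGI_MBPS = [32.5, 65.0, 97.5, 130.0, 195.0, 260.0, 292.5, 325.0, 390.0, 433.3]


def best_mcs(delivery_ratio):
    """delivery_ratio: dict of MCS -> observed fraction of frames delivered.

    Returns the MCS maximizing PHY rate x delivery ratio.
    """
    return max(delivery_ratio,
               key=lambda mcs: VHT80_1SS_SGI_MBPS[mcs] * delivery_ratio[mcs])


# Hypothetical measurements: fast rates fail more often.
print(best_mcs({7: 0.95, 8: 0.70, 9: 0.30}))  # 7
```

MCS 7 wins here (about 309 Mbps expected versus about 130 Mbps for MCS 9) even though MCS 9 is the faster rate on paper.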

Before a MU-MIMO AP transmits to multiple clients, it must look at the list of all clients that need data at that moment. Next, it has to choose the right combination of clients for which it can successfully decorrelate (make look different) the signals.
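One common textbook heuristic for this is greedy semi-orthogonal user selection, sketched here in Python. It is not any vendor’s algorithm: the channel vectors (one complex gain per AP antenna) are made up, the 0.5 correlation threshold is arbitrary, and the group is capped at 3 clients to match the practical limit in #10. Start with the strongest client, then add clients whose channels are weakly correlated with everyone already picked.

```python
import math


def correlation(h1, h2):
    """Normalized |h1^H h2|: 0 = orthogonal (easy to separate), 1 = identical."""
    inner = sum(a.conjugate() * b for a, b in zip(h1, h2))
    n1 = math.sqrt(sum(abs(a) ** 2 for a in h1))
    n2 = math.sqrt(sum(abs(b) ** 2 for b in h2))
    return abs(inner) / (n1 * n2)


def select_group(channels, max_users=3, max_corr=0.5):
    """channels: dict of client name -> channel vector (one gain per AP antenna)."""
    by_strength = sorted(channels, key=lambda c: -sum(abs(x) ** 2 for x in channels[c]))
    group = []
    for client in by_strength:
        if len(group) == max_users:
            break
        if all(correlation(channels[client], channels[g]) < max_corr for g in group):
            group.append(client)
    return group


# Made-up channels for a 4-antenna AP.
channels = {
    "phone":  [1 + 0j, 0j, 0j, 0j],
    "tablet": [0j, 1 + 0j, 0j, 0j],
    "laptop": [0.9 + 0j, 0.1 + 0j, 0j, 0j],   # points almost the same way as the phone
    "watch":  [0j, 0j, 0.5 + 0j, 0.5 + 0j],
}
print(select_group(channels))  # ['phone', 'tablet', 'watch']
```

The laptop’s channel correlates about 0.99 with the phone’s, so it waits for a later transmission while the weaker but orthogonal watch joins the group. More clients to choose from means better odds of finding well-separated ones.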

MU-MIMO gets more efficient with more clients spread out over a larger area because it has more selection freedom.

#8

MU-MIMO is a bandage and generally kinda sucks. SU-MIMO (regular MIMO) is perfectly efficient: if you go from 2×2:2 to 4×4:4, you get double the performance. MU-MIMO has so many efficiency problems that you don’t get nearly the same benefits. If it were possible to make a 4×4:4 mobile phone, MU-MIMO would go away.

#9

Transmit beamforming will go away. Once the bulk of the clients on your network are MU-capable, why would you ever use precious AP Tx chains to gain 3 to 6 dB? You won’t. As much as MU-MIMO sucks, TxBF is worse. Sorry about that.
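Where does 3 to 6 dB come from? Under ideal assumptions (equal channel gain from every antenna, perfect phase alignment, total Tx power held fixed), N transmit chains combining coherently at a single-antenna client deliver N times the received power, so the gain is 10·log10(N) dB. A minimal Python sketch:

```python
import math


def ideal_txbf_gain_db(tx_chains: int) -> float:
    """Upper bound on coherent Tx beamforming gain toward a single-antenna client.

    Each of N antennas gets P/N power; amplitudes add in phase, so the received
    power is N * P, i.e. 10*log10(N) dB better than a single antenna.
    """
    return 10 * math.log10(tx_chains)


print(round(ideal_txbf_gain_db(2), 1), round(ideal_txbf_gain_db(4), 1))  # 3.0 6.0
```

Two chains give about 3 dB and four give about 6 dB; real-world gains are lower.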

#10

In #4 I mentioned that SU and MU-MIMO are basically the same thing. There is one major difference though: point of view. A 4×4:4 AP can send 4 streams to a 4×4:4 client all day long. BUT a 4×4:4 MU-MIMO AP can only select 3 MU clients at the same time. Why the contradiction? Because a receiving 4×4:4 client can correlate all four signals it “sees”. It can compare them to each other, do some PhD math and recover all four data streams. However, if you are sending MU data to multiple clients, they cannot compare their signals to each other. Each client sees only what arrives on its own antennas, so none of them has the full view that a single 4×4:4 STA has. As an aside, this is why MU-MIMO requires client feedback but SU-MIMO doesn’t.

You WILL see vendors touting a 4×4:4:4 AP in the future. My employer will be one of them. However, the 4th MU stream is luck. 3 streams can be controlled, and IF it works out that the 4th “lands” on a client that needs data, 4 MU streams could be sent. The likelihood of this happening increases as client count and client-to-client distance increase.

As an aside, I can already see TMEs (Technical Marketing Engineers) in a lab walking around with a mobile phone trying to figure out how to get 4 MU streams. 🙂

So will you see tests that show a 4×4:4:4 AP sending 4 MU streams? Sure. Will you see it in real life? We shall see, but the math says 3 MU streams is all you will really get.

Conclusion

This technology is so complicated that an entire blog could be written on every aspect of it. I hope this helps clear up some myths and misinformation. Would love to hear comments and questions.

GT

@gthill

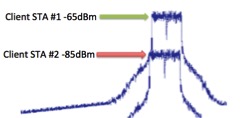

All Wi-Fi performance is directly related to Signal to Interference + Noise Ratio (SINR). Ideally you want as much signal vs. interference as possible (to a point). When two Wi-Fi signals arrive at the client at the same time, as long as one is sufficiently stronger than the other, the stronger of the two signals will be deciphered and processed. How much stronger depends a lot on the chip itself, but with a 20 dB difference the stronger signal would be received and deciphered perfectly. The weaker signal just gets treated as noise.
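A minimal Python sketch of the SINR arithmetic, with hypothetical levels: a -60 dBm signal, a -80 dBm interferer (the 20 dB difference above), and a -95 dBm noise floor. The key detail is that interference and noise must be added in milliwatts, not in dBm.

```python
import math


def dbm_to_mw(dbm: float) -> float:
    return 10 ** (dbm / 10)


def sinr_db(signal_dbm, interference_dbm, noise_dbm):
    """SINR in dB; interference_dbm is a list of interferer levels."""
    denom_mw = sum(dbm_to_mw(p) for p in interference_dbm) + dbm_to_mw(noise_dbm)
    return signal_dbm - 10 * math.log10(denom_mw)


print(round(sinr_db(-60, [-80], -95), 1))  # 19.9
```

The result is roughly 19.9 dB. Whether that is enough to decode a given frame depends on the chip and on the MCS.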
